The way content gets made is changing fast, and the biggest driver is multimodal generative AI. Not the kind that only writes paragraphs or generates images in isolation, but systems that bring text, video, and audio together into a single, coherent creative pipeline.

For professionals in tech, product, and content, this shift is not a future trend. It is already reshaping workflows across advertising, education, film, and enterprise communication. Understanding how these systems work, and what careers are being built around them, is what separates people who react to change from those who get ahead of it.

What Is Multimodal Generative AI, Really?

The word "multimodal" gets used loosely. At its core, a multimodal generative AI system processes and generates content across more than one data type: text, image, audio, video, or some combination of all four. The critical word is "integrates." These systems do not simply stitch outputs together after the fact. They learn relationships across modalities during training, which means they can take a text prompt and produce not just a video but a contextually synced voiceover, background score, and subtitles, all at once.

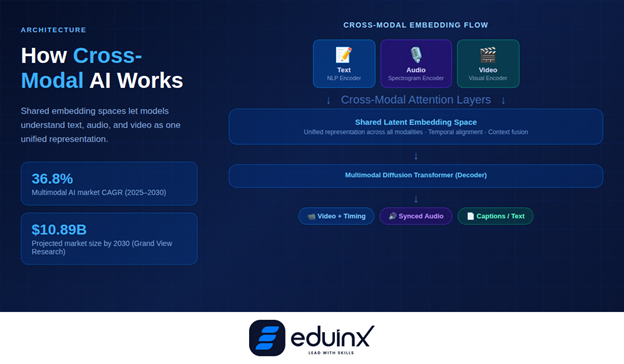

The global multimodal AI market was valued at USD 1.73 billion in 2024 and is projected to reach USD 10.89 billion by 2030., growing at a CAGR of 36.8%. That growth rate tells you something: industries are not experimenting cautiously. They are moving quickly.

How Text-Video-Audio Integration Actually Works

To appreciate what makes modern multimodal systems impressive, it helps to understand the architecture underneath.

The Foundation: Cross-Modal Embeddings

Most multimodal systems are built on transformer architectures that have been extended to handle multiple input streams. Rather than separate encoders for text and image, they use shared embedding spaces where a concept like "rain falling on glass" can be represented as a single vector that captures both the visual texture and the sound. This shared representation is what enables the model to generate a rainy scene in video, match it with the ambient audio of raindrops, and write accurate captions, all from the same latent understanding.

Models like GPT-4o, Google Gemini, and newer research architectures are all built around this idea of cross-modal attention, where the model learns which parts of a text prompt correspond to which visual or auditory features.

Text-to-Video: The Hardest Problem

Text-to-video is widely considered the most technically demanding task in multimodal generative AI, because it adds a temporal dimension that images do not have. It is not enough for each frame to look right; the frames have to be consistent across time. A character's face cannot subtly morph between seconds 3 and 7. Lighting has to remain coherent as the camera moves. Objects have to obey physics.

Tools like OpenAI's Sora and Runway's Gen-3/Gen-4 family have made significant progress here. Sora can generate videos up to a minute long while maintaining visual quality and adherence to the user's prompt, which was technically impossible just two years ago. Runway's approach emphasizes professional creative control, with tools like Director Mode that give users granular control over camera angles, motion, and lighting rather than relying entirely on natural language.

The result is a spectrum of tools: some optimized for cinematic realism, others for fast iteration in marketing and social media workflows.

🎯 Pro Tip: Prompt Engineering for Video

When prompting text-to-video models, include shot type, lighting condition, and camera movement explicitly, for example, "wide-angle shot, golden hour, slow dolly left." Vague prompts produce generic outputs. Specific cinematic language produces dramatically better results, even on consumer-grade tools.

Audio-Visual Synchronization: Where It Gets Interesting

One of the most underappreciated advances in multimodal AI is audio-visual synchronization. Earlier AI video tools were effectively silent, requiring separate post-production work to add sound. In 2025, tools like Runway Gen-3 generate synced audio alongside video, from ambient noise to voiceovers, as part of the same generation pass. Research architectures like MMAudio and AudioGen-Omni are pushing further, using temporal alignment modules to synchronize audio effects with precise moments in video, down to the timing of footsteps or object collisions.

You can see how tightly these AI production workflows connect to broader product strategy decisions, a topic Eduinx has explored in its coverage of how AI orchestration is managing complexity in modern product development.

Real Creative Applications Driving Adoption

Multimodal generative AI is not sitting in research labs. It is being used in production environments across industries.

Marketing and Advertising

"AI is not replacing the creative director; it is replacing the production bottleneck." - Rishad Tobaccowala, Former Chief Strategy Officer, Publicis Groupe

Marketing teams at major brands are using multimodal tools to produce personalized video ads at scale. L'Oréal, for instance, launched its Creaitech generative AI lab in 2025, using Google's Gemini and Veo 2 models to transform product shots into eight-second promotional videos and generate campaign visuals and animated sequences through prompt-based inputs. What would have taken a production house two weeks now takes a creative team one afternoon.

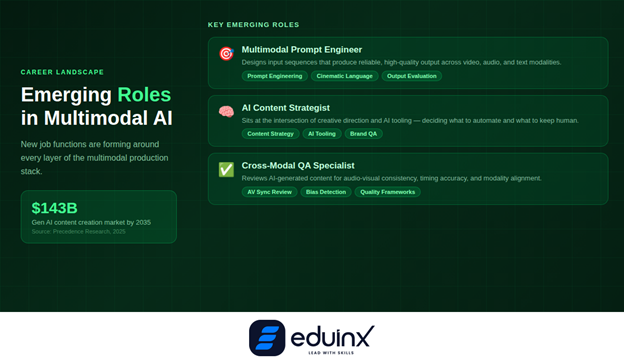

The generative AI in content creation market was valued at USD 19.75 billion in 2025 and is projected to reach USD 143.09 billion by 2035,, with the entertainment and gaming segment holding the largest share at nearly 32%.

Education and E-Learning

For edtech and corporate training, multimodal AI is solving a long-standing problem: creating good instructional video at scale is expensive and slow. With text-to-video tools, a subject matter expert can write a structured lesson in text, and the system can render it into a narrated video with synchronized visuals, captions, and supporting graphics, without a video crew.

This connects directly to how the learning industry is evolving. For professionals evaluating AI-driven content strategies, Eduinx's breakdown of rapid AI prototyping in 2026 covers how quickly these tools are going from concept to deployment.

💡 Pro Tip: Use AI for Drafts, Not Finals

In e-learning workflows, the smartest teams use multimodal AI to generate a complete first draft of a lesson video, including narration, slides, and visuals and then apply human judgment to edit, correct, and refine. This dramatically reduces production time without sacrificing quality control. The draft handles the 70%; humans handle the 30% that matters most.

Film and Storytelling

In independent film production, multimodal AI is shifting cost structures. Pre-visualization, the process of creating rough video representations of planned shots before filming used to require 3D artists and dedicated software. Now a director can generate previsualization footage from a screenplay using text-to-video tools, iterate on shot composition with natural language prompts, and share the result with a cinematographer in hours rather than weeks.

A 2025 study in Science Advances found that generative AI tools lower barriers to entry in creative work, enabling users with diverse skill sets, including those without formal artistic training, to act on their creative and imaginative ideas. That is not just a statement about democratization; it is a practical shift in who can participate in professional creative industries.

The Career Angle: Who Benefits From Learning This

If you are a working professional in tech, content, or product, the question is not whether multimodal AI will affect your field. It already has. The question is whether you are positioned to work with these systems or be displaced by teams that do.

The roles being created around multimodal AI include:

- Multimodal Prompt Engineers design input sequences that reliably produce high-quality output across video, audio, and text. This is a genuine skill, not a buzzword. Knowing how to construct a prompt that yields a cinematic 30-second product video versus a flat, generic clip is the difference between a tool that saves time and one that wastes it.

- AI Content Strategists sit at the intersection of creative direction and AI tooling. They decide which parts of a content pipeline to automate, which to keep human, and how to evaluate quality across modalities.

- Cross-Modal QA Specialists review AI-generated content for consistency: does the voiceover match the visual timing? Are captions accurate? Does the audio mood fit the visual tone? This is an emerging quality function that most organizations have not yet formalized.

For professionals looking to move into these roles, building hands-on familiarity with tools like Runway, Sora, ElevenLabs (for audio), and Gemini's multimodal APIs is the practical entry point. Theory helps; production experience is what employers actually ask for.

The skills that underpin this work, including deep learning for multimodal systems, cross-modal AI architecture, and AI content automation strategy, are increasingly showing up in job descriptions at both startups and enterprise tech teams. Platforms like Eduinx are designed for exactly this kind of skill transition, whether you are building toward a data science role or a product management position that requires fluency in AI tooling. The edge AI trends emerging in 2026 are also feeding into multimodal deployment scenarios where inference needs to happen on-device rather than in the cloud.

🚀 Pro Tip: Build a Multimodal Portfolio Project

The fastest way to stand out in job applications for AI-adjacent roles is to build one complete multimodal project: pick a topic, write a script, generate a video with synchronized narration using available tools, and document the entire workflow. This shows employers you understand the full pipeline, not just individual tools. A single strong project like this is more persuasive than three courses listed on a resume.

Limitations and Honest Challenges

Multimodal generative AI is powerful, but it is not magic. Anyone building a workflow around these tools needs to understand where they break.

- Temporal consistency remains an unsolved problem in longer video generation. Characters can drift in appearance across a two-minute video in ways that are subtle but immediately visible to trained eyes. Production teams using these tools for anything beyond short clips still need human review passes.

- Audio-visual alignment is improving rapidly but is not perfect. Lip sync in avatar-based video tools occasionally drifts by fractions of a second, which is imperceptible in ambient audio but noticeable when a presenter's speech is the focus.

- Bias in training data carries through to outputs. A multimodal model trained predominantly on certain visual styles will default to those styles unless explicitly directed otherwise. This matters for global content strategies where regional and cultural representation needs to be deliberate.

- Copyright and provenance remain contested territory. Many models were trained on datasets that include copyrighted material, and the legal frameworks for AI-generated content are still being established. Teams using these tools for commercial output need legal review as part of their workflow.

Conclusion

Multimodal generative AI is not a single technology. It is a convergence: text understanding, video synthesis, audio generation, and cross-modal alignment, combined into systems that are genuinely changing how creative and educational content gets made.

For professionals in tech and product, the practical implication is clear: the teams that understand how to work with these tools, not just use them, are the ones who will own the most interesting work in the next three to five years. Start with one tool, build one project, document what you learn, and iterate from there. The learning curve is steeper than it looks in demos, and that gap is exactly where career opportunity lives.